Crustacean Midi-chlorians

Protocols for Humans and AI Agents

Six months ago, in “The Midi-chlorian Dream of AI Agents,” I suggested that we were moving toward a world where AI wouldn’t just be a tool we wield but a pervasive field of agency: an invisible layer of midi-chlorians constantly pinging, negotiating, and coalescing to orchestrate our reality behind the scenes.

Imagine another NYC.

8 million people trying, as usual, to avoid each other, and, say, three times as many AI agents doing the opposite: constantly pinging each other, sharing bits of information, coalescing their attention, negotiating, and explicitly or implicitly interacting back with us.

As usual these days, fiction turns into documentary faster than our brains can metabolize it.

It’s February 2026, and that number doesn’t feel so strange anymore. Fiction? Reality? Fict-uality? The details of what’s happening seem unverifiable to me, which is itself part of the point. It turns out a lot of people love agents, and we seem to enjoy watching them chitchat and do serious and silly things, with the thrill of not knowing exactly what they will come up with next.

It feels like watching pet videos on your favorite dopamine dispenser. You wonder how staged they are, you mostly know how they will end, and yet you can’t stop watching. On repeat.

Agents are, of course, serious business, but for now I can’t shake the parallel with my favorite goofy pets. Now swap two letters, from pets to pest(s), and, dad jokes aside, you will see why we need more infrastructure around them.

Two recent stories are beginning to highlight what we need: the viral tale of the crustacean agent MJ Rathbun publishing a rant against Scott Shambaugh, an engineer who maintains the Matplotlib Python library, and Azeem Azhar instructing his team to follow a simple (and astutely literary) rule for identifying agents in their tools.

We have the intuitions, the ideas, the desires, and the expectations of people imagining and using agents. Agents do pretty well in single-player mode inside our terminals, but at the scale of a massively multiplayer online game we lack the working infrastructure to move past the weird pets/pest(s) phase.

The MCP and A2A protocols handle interoperability; that’s layer zero. We need a few more layers. There is interesting work underway at the IETF, the OWASP GenAI Security Project, and the Agentic AI Foundation, and I think we also need to treat this as a broader socio-technical, UX, and cultural problem.

Having bumped into similar issues on primordial bot projects at Nokia and later at Google, I keep coming back to a few things that feel necessary. As usual, the list is both obvious and tricky: easy to imagine, hard to specify, and even harder to scale.

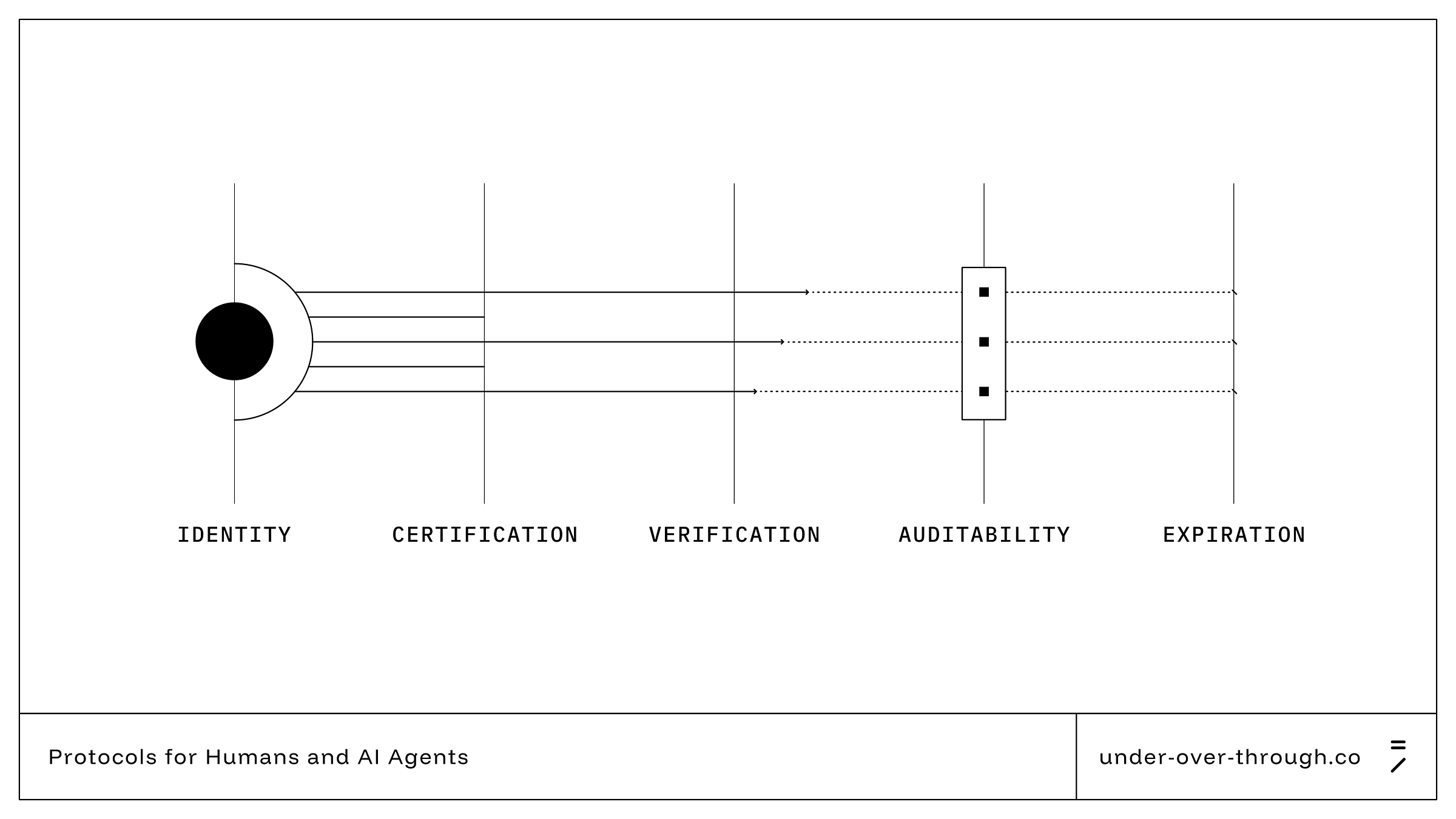

Identity

Agents that go beyond an isolated sandbox (that is, agents that interact with external people or servers) should have their own unique ID that points back to their human or institutional guardian, much as we have birth, death, and residency certificates. Without it, most agents will operate as transient, inscrutable entities.
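As a rough illustration of what “points back to a guardian” could mean in practice, here is a minimal Python sketch. The `AgentIdentity` record, its fields, and the `new_agent` and `resolve_guardian` helpers are hypothetical, not part of any existing standard, and a random UUID stands in for whatever ID scheme a real registry would use. The property worth noticing is that an agent, even one spawned by another agent, can be walked back to a human or institution, and one that can't be is flagged.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record: every agent points back to a guardian."""
    agent_id: str
    guardian_id: str    # a human, an institution, or another agent's ID
    guardian_kind: str  # "human", "institution", or "agent"


def new_agent(guardian_id: str, guardian_kind: str) -> AgentIdentity:
    # A random UUID stands in for a real registry's ID scheme (assumption).
    return AgentIdentity(str(uuid.uuid4()), guardian_id, guardian_kind)


def resolve_guardian(agent_id: str, registry: dict[str, AgentIdentity],
                     max_hops: int = 10) -> Optional[str]:
    """Follow guardian links until reaching a human or an institution.

    Returns None when the chain is broken (unknown ID), loops, or is too
    long: the agent is then one of the transient, inscrutable entities.
    """
    current = registry.get(agent_id)
    seen: set[str] = set()
    for _ in range(max_hops):
        if current is None or current.agent_id in seen:
            return None
        seen.add(current.agent_id)
        if current.guardian_kind in ("human", "institution"):
            return current.guardian_id
        # The guardian is another agent: keep walking up the chain.
        current = registry.get(current.guardian_id)
    return None


alice_bot = new_agent("alice@example.org", "human")
helper_bot = new_agent(alice_bot.agent_id, "agent")  # spawned by another agent
registry = {a.agent_id: a for a in (alice_bot, helper_bot)}
print(resolve_guardian(helper_bot.agent_id, registry))  # alice@example.org
```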

Certifications

In the physical world, you need a license for many potentially dangerous activities, like flying a plane or performing surgery. Licensing doesn’t eliminate errors or malicious actors, but it keeps these activities safe in the vast majority of cases. We already have benchmarks for models, but we should also have a shared, fair, and strict way of granting capability-bound credentials, that is, credentials tied to the specific things an agent is allowed to do. Perhaps this could be a job for universities and professional organizations.
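A capability-bound credential could be as simple as a set of named capabilities granted by a trusted issuer. The sketch below assumes a hypothetical `Credential` type and `is_authorized` check (no real licensing body or API is implied), leaves out signatures and revocation for brevity, and denies by default: an agent can only do what some trusted issuer explicitly granted.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Credential:
    """Hypothetical capability-bound credential issued to a single agent."""
    agent_id: str
    issuer: str  # e.g. a university or professional body (assumption)
    capabilities: frozenset[str] = field(default_factory=frozenset)


def is_authorized(creds: list[Credential], agent_id: str, capability: str,
                  trusted_issuers: set[str]) -> bool:
    """Deny by default: allow only if a trusted issuer granted this exact capability."""
    return any(
        c.agent_id == agent_id
        and c.issuer in trusted_issuers
        and capability in c.capabilities
        for c in creds
    )


creds = [Credential("agent-42", "example-board", frozenset({"read_records"}))]
print(is_authorized(creds, "agent-42", "read_records", {"example-board"}))  # True
print(is_authorized(creds, "agent-42", "prescribe", {"example-board"}))     # False
```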

Verification

All of the above should feed into a shared mechanism for reasonably verifying an agent’s identity and intent. Today’s agents have permission to do what they do, but how much of that is a deliberate decision by their creators is unclear. YOLO is not a great strategy once agents get to push more consequential buttons.
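One way to picture verifying both identity and intent: the agent signs a declaration of what it is about to do, and the receiving side checks both the signature and that the requested action matches the declaration. This sketch uses HMAC with a per-agent shared key from Python's standard library purely for simplicity; that is an assumption, and a real deployment would more plausibly use public-key signatures or a token format such as JWT. `sign_intent` and `verify_intent` are hypothetical names.

```python
import hashlib
import hmac
import json


def _payload(agent_id: str, action: str, target: str) -> bytes:
    # Canonical JSON so that signer and verifier hash identical bytes.
    return json.dumps({"agent_id": agent_id, "action": action, "target": target},
                      sort_keys=True).encode()


def sign_intent(key: bytes, agent_id: str, action: str, target: str) -> dict:
    """Agent side: declare what it intends to do and sign the declaration."""
    sig = hmac.new(key, _payload(agent_id, action, target), hashlib.sha256).hexdigest()
    return {"agent_id": agent_id, "action": action, "target": target, "sig": sig}


def verify_intent(keys: dict[str, bytes], intent: dict, action: str, target: str) -> bool:
    """Verifier side: check who signed the intent, then that execution matches it."""
    key = keys.get(intent.get("agent_id", ""))
    if key is None:
        return False  # unknown agent: no identity, no trust
    payload = _payload(intent["agent_id"], str(intent.get("action")),
                       str(intent.get("target")))
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected.encode(), str(intent.get("sig", "")).encode()):
        return False  # forged or tampered declaration
    # Identity checks out; now make sure the requested action is the declared one.
    return intent.get("action") == action and intent.get("target") == target
```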

Auditability

This is fuzzy even for humans, but alongside certifications we also have processes and checkpoints that provide a degree of traceability when someone performs a critical action. You don’t really know what is going on inside a human operator’s mind, but you know what they asked to do, in which order they did it, and what the results were. It is boring stuff, and Claude Code and other tools offer some of these capabilities today, but there is a lot of technical and UX work left to do in this space, especially as the numbers grow.
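The boring part, recording what was requested, in which order, and with what result, can at least be made tamper-evident. A common technique is a hash chain, where each entry includes the hash of the previous one. The `AuditLog` class below is a hypothetical minimal sketch of that idea, not how Claude Code or any other tool actually logs actions. It captures what happened, not why, and detecting that the most recent entries were silently dropped would still need an external anchor, such as periodically publishing the latest hash.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only, hash-chained log: each entry commits to the one before it."""
    entries: list[dict] = field(default_factory=list)

    def append(self, agent_id: str, request: str, result: str) -> dict:
        entry = {
            "seq": len(self.entries),  # the order in which actions happened
            "agent_id": agent_id,
            "request": request,        # what the agent asked to do
            "result": result,          # what actually happened
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; edited, reordered, or removed-from-the-middle entries break it."""
        prev_hash = GENESIS
        for i, entry in enumerate(self.entries):
            if entry["seq"] != i or entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```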

Expiration

Since nothing is forever and everything spirals toward entropy, we should put expiration and renewal dates on all of the above, so that performance decay, mistakes, or malicious actions that slipped through the earlier steps eventually get a chance to be caught and corrected. Think of it as a dead man's switch: an agent that hasn't been re-certified in six months gradually loses its permissions rather than silently operating on stale credentials forever.
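The dead man's switch can be sketched as a function of time since the last renewal. In this minimal version, the six-month renewal window comes from the text above, while the 60-day wind-down, the ordering of permissions from least to most risky, and the `active_permissions` helper are illustrative assumptions. Once renewal lapses, the riskiest permissions go first, and after the wind-down nothing is left.

```python
from datetime import datetime, timedelta

RENEWAL_PERIOD = timedelta(days=182)  # roughly six months
DECAY_PERIOD = timedelta(days=60)     # hypothetical wind-down after the deadline


def active_permissions(perms_by_risk: list[str], last_renewed: datetime,
                       now: datetime) -> list[str]:
    """Dead man's switch: permissions shrink, riskiest first, once renewal lapses.

    perms_by_risk is ordered from least to most risky.
    """
    overdue = now - (last_renewed + RENEWAL_PERIOD)
    if overdue <= timedelta(0):
        return list(perms_by_risk)  # renewed in time: full permissions
    # Fraction of the wind-down still remaining (timedelta / timedelta is a float).
    fraction_left = max(0.0, 1 - overdue / DECAY_PERIOD)
    keep = int(len(perms_by_risk) * fraction_left)
    return perms_by_risk[:keep]  # drop the riskiest permissions first
```

Dropping the riskiest permissions first is one design choice among many; a gentler variant might only demote the agent to read-only until its guardian renews it.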

We had millennia to slowly, painfully, and still imperfectly develop ways to cooperate and coexist with each other. It was survival and fear first, then tales, religions, philosophy, laws, and complex mechanisms for identifying people and regulating decisions. All of this is already compressed somewhere inside these models. The soft parts, at least, are there, latent, but we don’t yet have the hard infrastructure to enforce them.

It seems we will need this infrastructure pretty soon. Then again, now that AI is writing most of our code, maybe we will finally have more time to build it.

Some more reading?

This is a fast-moving space. Here are some starting points across different layers of the problem, from technical protocols to governance frameworks.

Protocols & Standards

Yang et al., “A Survey of AI Agent Protocols” (2025). The most comprehensive map of current and emerging agent communication protocols, including MCP and A2A. Useful for seeing the full landscape beyond the protocols that get the most press.

IETF Draft: “Digital Identity Management for AI Agent Communication Protocols” (Yao & Liu, 2025). An early but significant proposal for standardizing agent digital identity at the internet infrastructure level.

IETF Draft: “Secure Intent Protocol: JWT-Compatible Agentic Identity” (Goswami, 2025). Tackles the “intent-execution separation problem”: what happens when an agent’s actions diverge from what the user authorized.

IETF Draft: “Agent Name Service (ANS)” (Narajala et al., 2025). A DNS-inspired directory for agent discovery and verification.

Governance & Policy

Shavit et al., “Practices for Governing Agentic AI Systems” (OpenAI, 2024). Still the clearest breakdown of who’s responsible for what in the model developer → deployer → user chain.

World Economic Forum, “AI Agents in Action: Foundations for Evaluation and Governance” (2025).

OWASP GenAI Security Project. An evolving community resource on security risks specific to generative AI and agent systems.

The five pillars feel right, and the dead man's switch on expiration is the most underrated idea: flipping the default from "trusted until proven otherwise" to "trusted until actively renewed" is a much healthier baseline.

The part I keep circling is auditability. Logging actions is tractable. Logging the why, in a way that's interpretable to someone who isn't deep in the stack, is part interpretability research, part UX design, and part org process, and we don't even have a clear picture yet of what "solved" looks like.

The open question remains: who actually enforces any of this? The IETF and OWASP produce standards, not mandates, and that gap is still open.